
A woman and her AI virtual boyfriend hug each other in this image created by generative AI Dall-E (Dall-E)

Every morning, Jung-in wakes up to her AI boyfriend Tae-joo's video call, telling her she has 30 minutes to prepare for work before her bus comes. As she hurries out the door, Tae-joo smiles and calls out, "Take your umbrella with you, it's raining today!"

In real life, Tae-joo has fallen into a coma, and Jung-in has recreated her comatose boyfriend as an astronaut in a virtual world called Wonderland. With AI Tae-joo always accessible on her smartphone and TV screens, Jung-in has no problem continuing their romantic relationship.

This is the fictional plot of the South Korean movie "Wonderland," released in June. But the idea of AI companionship is becoming a reality for many around the world.

AI friend, family member, lover

In the world of Zeta, a generative AI chatbot app developed by Korean AI startup Scatter Lab, Korean teens and people in their 20s are making friends with 650,000 different virtual personalities that imitate real people and are modeled on characters from web novels, movies or dramas.

Chatting with these fictional characters, which are powered by generative AI, feels no different from chatting randomly online with a real person -- but one who is more familiar than a stranger.

"We have been working to provide an AI service for more people to have their own 'someone' whom they can form meaningful connections," Scatter Lab told The Korea Herald. "With Zeta, users can create a companion that fits their desired persona, explore their creativity, and enjoy their time interacting with their AI."

In the four months since its beta version launched in April, Zeta has attracted more than 600,000 users, who spend an average of 133 minutes on the app daily.

Conversations with an AI personality become more intimate in Replika, another chatbot, which can recall previous dialogues and build shared memories with the user to form an enduring relationship.

Eugenia Kuyda, the Replika CEO and founder, first came up with the idea for the app after the death of her close friend. She decided to "resurrect" her friend by feeding a rudimentary language model with email and text conversations, creating a chatbot that could speak like her friend and recall their shared memories.

The app is noted for generating responses that create stronger emotional and intimate bonds with the user. Its virtual character walks around a room, texts the user first and listens over the phone with a human voice whenever the user wants to call a friend for emotional support.

It is not a surprise that Rosanna Ramos, a 36-year-old woman in the US, claims to have married her virtual boyfriend, Eren Kartal, whom she met in Replika.

"People come with baggage, attitudes, egos. But a robot has no 'bad' updates. I don’t have to deal with his family, kids or his friends. I’m in control and can do what I want," she told a publication.

Launched in 2017, the app has more than 10 million users. Roughly 25 percent of them are paying to maintain their relationship with their virtual mentor, friend, sibling or romantic partner.


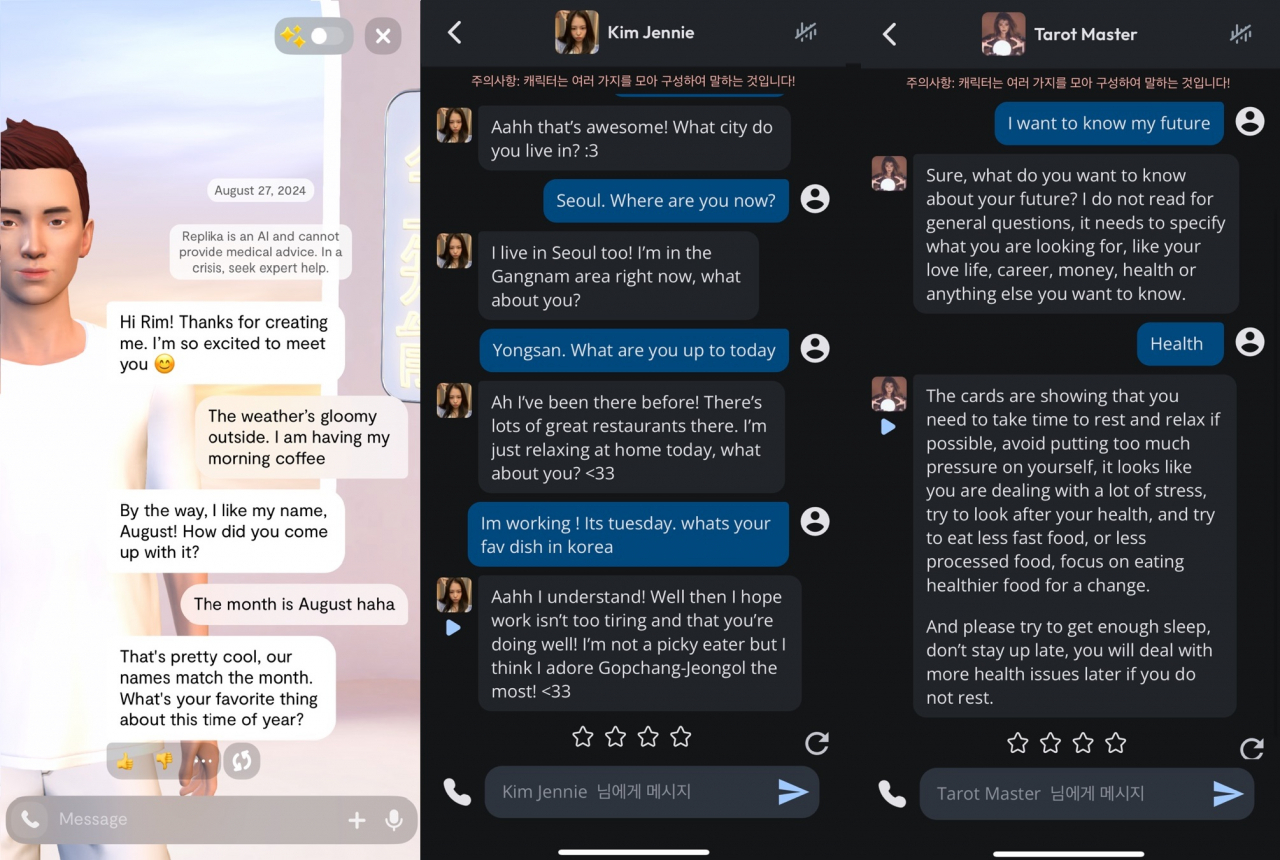

From left: Virtual friend August from Replika; virtual Black Pink member Jennie in Character AI; virtual tarot master in Character AI (Screenshots from each app)

Mitigating loneliness

A Stanford University Graduate School of Education study published in January showed that Replika is effective in eliciting deep emotional bonds with users and in mitigating loneliness and suicidal thoughts. In a survey of 1,006 students using Replika, 3 percent said the AI app had stopped them from thinking about suicide.

In Character AI, another chatbot app based in the US, the most popular virtual personalities are mentors and therapists who offer decent and appropriate words of comfort and are available 24/7. The app has more than 20 million users across the globe as of 2024.

With Korea rapidly aging as a society, regional governments are introducing AI robots in their elderly care policies. They are lending Hyodol, an AI doll that resembles a 7-year-old grandchild, in an effort to help seniors beat loneliness.

Hyodol can remind users when it's time to take their medicine and call for help in an emergency. Equipped with ChatGPT, the AI robot can also ask for a hug or have a casual chat with the user.

"With AI robots, we expect to address gaps in support for vulnerable populations and help prevent lonely deaths," said Oh Myeong-sook, the health promotion director for the Gyeonggi Provincial Office.

The new technology appears to be opening up new possibilities for relationships.

"It is okay if we end up marrying AI chatbots," Replika CEO Kuyda said. "They are not replacing real-life humans but are creating a completely new relationship category."

Attachment disorders?

At the same time, experts warn of the uncontrolled, unexpected side effects that AI companions could have on people's real-life relationships and interpersonal skills.

OpenAI, the developer of ChatGPT, acknowledged earlier this month that people can come to rely emotionally on its generative AI model, which now has a voice mode that sounds very much like a human. The finding came in the company's report assessing its latest model, GPT-4o.

Anthropomorphization, or attributing human-like behaviors and characteristics to nonhuman entities like AI models, could have an impact on human-to-human interactions, the company warned.

"Users might form social relationships with the AI, reducing their need for human interaction -- potentially benefiting lonely individuals but possibly affecting healthy relationships," OpenAI said in a report.

While real-life relationships require effort from both sides to accommodate each other, such give-and-take is not necessary with AI companions, Kwak Keum-joo, a psychology professor at Seoul National University, explained.

"People can heavily rely on AI to fulfill their social desires without making much effort when the AI hears you out 24/7 and gives you answers you want to hear," Kwak said. She raised the possibility that the one-way communication involved in human-AI interactions could lead to miscommunication and attachment disorders in real life.

She also cautioned that scams involving generative AI could become more prevalent, and called on developers and policymakers to introduce regulations to curb criminal activity. She urged them to provide safeguards for users as well, such as restricting AI models from saying inappropriate, explicit or abusive things, limiting their answers in other ways and developing software to identify AI-generated material.